Optimization, advanced process control and performance monitoring are possible via simulation tools

Software advances, including faster computations and ease of use, are opening new doors for simulation software applications in chemical process industries (CPI) facilities, allowing processors to extend the plant model beyond design and into operations for endeavors such as optimization, advanced process control, performance monitoring and predictive analytics.

Process design was the earliest application for simulation software. In early iterations, steady-state simulators were used to determine operating parameters, constraints and capacity during the preliminary design stage, says Livia Wiley, senior product marketing manager for SimSci with Schneider Electric (Andover, Mass.; www.schneider-electric.com). “However, it doesn’t end there anymore. Once you have that design finalized, it becomes the ‘digital twin’ of the plant,” she says. “You can take that model of the plant and apply it to a dynamic-simulation product, which allows you to input real plant data into the simulation environment and, using the simulated facility, see how the plant will react, in realtime, to any changes you might make, as well as understand what happens when you have a shutdown, switch from summer to winter conditions, have an emergency situation or other scenarios.

“The ability to apply plant data and have the model function as the plant also permits processors to use simulation tools to get into optimization of the plant and, further, into functions such as augmented reality, predictive analytics and other Industrial Internet of Things (IIoT) applications,” says Wiley. “This is the lifecycle of process simulation as we know it today.”

Simulation for optimization

“Today, processors are really looking to get the most out of the facilities they have, so efficiency is the ultimate goal,” says Luke Addington, business development manager with Bryan Research and Engineering (BRE; Bryan, Texas; www.bre.com). “So using simulators for plant optimization in terms of recovery, improving processes and minimizing costs is becoming more important.”

When used for plant optimization, simulators are able to take into account the parameters for all the units in the facility, explains Schneider Electric’s Wiley. For example, in a petroleum refinery, the model would contain all the operating conditions on different units and pieces of equipment in the refinery and the optimizer would bring all those parameters together — including constraints, operating ranges and so on — and allow users to optimize the facility based upon a certain set of defined objectives.

“Optimization looks at the entire refinery and, given the constraints on each piece of equipment, allows the user to say, ‘If I use this feed in the process and have these constraints, how do I maximize the facility for the highest yield from this specific reactor?’” she says. “You can run a plant without a process optimization simulator, but I’m not sure you want to because the point is to get the most profit out of your plant. Optimization shows you all the things you can do to get the most economic benefit out of your facility.”
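As a rough illustration of what an optimizer does with equipment constraints, the Python sketch below searches a grid of reactor temperatures and feed rates, discards any operating point that violates a limit, and keeps the most profitable one. The conversion curve, prices and the three equipment limits are hypothetical placeholders. A commercial optimizer would evaluate a rigorous plant model with a nonlinear-programming solver rather than an exhaustive grid.

```python
from itertools import product

# Hypothetical equipment limits (illustrative assumptions only)
MAX_FURNACE_TEMP_C = 340.0   # furnace firing limit
MAX_FEED_TPH = 100.0         # feed pump capacity
MAX_PRODUCT_TPH = 80.0       # downstream separation capacity

PRODUCT_PRICE = 500.0  # $/t, hypothetical
FEED_COST = 300.0      # $/t, hypothetical


def conversion(temp_c: float) -> float:
    """Hypothetical reactor conversion curve that peaks at 350 degC."""
    return 0.9 - 0.00005 * (temp_c - 350.0) ** 2


def is_feasible(temp_c: float, feed_tph: float) -> bool:
    """Check every equipment constraint for one candidate operating point."""
    return (
        temp_c <= MAX_FURNACE_TEMP_C
        and feed_tph <= MAX_FEED_TPH
        and conversion(temp_c) * feed_tph <= MAX_PRODUCT_TPH
    )


def profit(temp_c: float, feed_tph: float) -> float:
    """Hourly margin: product revenue minus feed cost."""
    return PRODUCT_PRICE * conversion(temp_c) * feed_tph - FEED_COST * feed_tph


def optimize():
    """Exhaustive search over integer setpoints; returns (profit, temp, feed)."""
    best = None
    for temp_c, feed_tph in product(range(300, 401), range(50, 121)):
        if not is_feasible(temp_c, feed_tph):
            continue  # skip points that break any equipment limit
        candidate = (profit(temp_c, feed_tph), temp_c, feed_tph)
        if best is None or candidate[0] > best[0]:
            best = candidate
    return best


best_profit, best_temp, best_feed = optimize()
print(f"T = {best_temp} degC, feed = {best_feed} t/h, margin = ${best_profit:,.1f}/h")
```

With these made-up numbers, the chosen point sits at the furnace limit and just under the downstream capacity limit, which is the typical outcome: the most profitable operation is pressed against the constraints of individual pieces of equipment.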

Today’s simulation allows processors to improve operations by running “what-if” scenarios regarding optimization of safety, asset availability and energy efficiency. “Using simulated models that take into account the plant data and the plant parameters allows users to examine a variety of situations and determine the absolute best and most profitable way to operate the plant at the optimum point,” says Schneider Electric’s Wiley. “At the end of the day, the benefits of optimization are profits.”

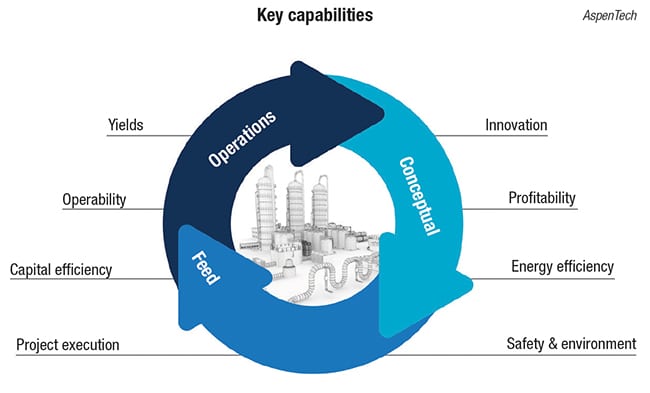

Vikas Dhole, vice president of engineering product management with AspenTech (Bedford, Mass.; www.aspentech.com), adds that because there are several elements to optimization, including operability, capital efficiency, safety, energy efficiency and environmental efficiency, there is now a focus on addressing all of them simultaneously. “No one says: ‘Today I want to optimize energy and tomorrow I want to optimize safety.’ So the focus of the software needs to be on allowing users to run all of these optimization scenarios at one time,” says Dhole.

For this reason, there is a move from the traditional simulation paradigm of modeling each objective one at a time to concurrent optimization, in which a single modeling effort yields energy-efficiency, safety and capital-efficiency information in one fell swoop (Figure 1).

Figure 1. There is a move from the traditional simulation paradigm of modeling each objective one at a time to concurrent optimization, in which a single modeling effort yields energy-efficiency, safety and capital-efficiency information in one fell swoop

Another change in simulation tools is that many users are interested in narrower, more clearly defined optimization at the asset or system level. “We are seeing a move from process optimization to asset optimization, which allows users to see inside the equipment and look at it in a holistic way, not just from design and operation, but also from a maintenance and reliability point of view,” explains Dhole.

Ahmad Haidari, global industry director for process, energy and power industries with ANSYS (Canonsburg, Pa.; www.ansys.com), agrees that simulation at this level is a growing — and helpful — trend. “Engineering analysis or engineering simulation at the subsystem level allows processors to answer difficult questions about maintaining certain systems and then gives them the confidence to optimize based on those answers,” Haidari explains. He cites an example of how simulation can assist with maintenance issues: “In a refinery, erosion can cause plant shutdowns and require intensive maintenance, which can take weeks and is expensive. By using simulation, we can understand where erosion may occur, based on the velocities of the eroding particulates, and then, during the next scheduled maintenance shutdown, tend to that area before it becomes a problem,” he says. “Simulation can also be used in this case to find a way to prevent erosion, perhaps by using less catalyst. Whatever scenarios they are considering can be run in the simulator without any risk to the actual process.”
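A much-simplified version of this kind of erosion screening can be sketched by ranking locations on their simulated particle velocities. The Python sketch below assumes that erosion rate grows as a power of impact velocity; exponents in the 2-to-3 range are often quoted for ductile metals, but the value used here is only a placeholder. The locations, velocities and reference velocity are hypothetical, and a real study would rely on the particle-tracking and erosion models in the simulation tool itself.

```python
# Relative erosion ranking from simulated local particle velocities.
# ASSUMPTION: erosion rate scales as velocity ** n; this exponent is a
# placeholder, since real values depend on particle, material and impact angle.
VELOCITY_EXPONENT = 2.5


def relative_erosion(velocity_m_s: float, reference_m_s: float,
                     exponent: float = VELOCITY_EXPONENT) -> float:
    """Erosion rate relative to a location running at the reference velocity."""
    return (velocity_m_s / reference_m_s) ** exponent


def inspection_list(velocities: dict, reference_m_s: float,
                    threshold: float = 1.0) -> list:
    """Locations whose relative erosion exceeds the threshold, worst first."""
    scores = {loc: relative_erosion(v, reference_m_s) for loc, v in velocities.items()}
    flagged = [loc for loc, score in scores.items() if score > threshold]
    return sorted(flagged, key=scores.get, reverse=True)


# Hypothetical peak particle velocities (m/s), e.g. exported from a CFD run
velocities = {"cyclone inlet": 22.0, "riser elbow": 18.0, "standpipe": 6.0}
print(inspection_list(velocities, reference_m_s=15.0))
```

The resulting list would feed the work scope for the next scheduled shutdown; the threshold of 1.0 simply flags anything eroding faster than the hypothetical reference location.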

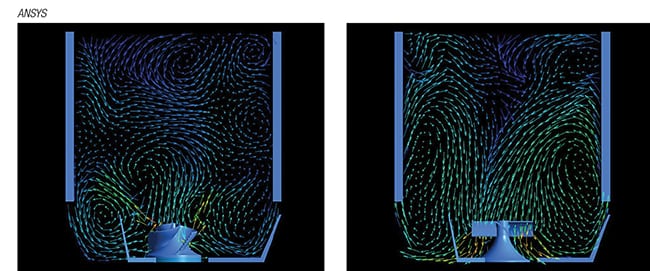

Another way processors might use simulation at the system level is to answer questions about scaleup. For example, says Haidari, engineers already have knowledge about how to scale up a mixing tank, but they may not understand the startup forces of that particular tank or they may want to know if they can use the tank for other applications or increase production or the rate of reaction. “Given a set of operational questions, you can get the data and the answers to these questions for that mixing tank by using simulation,” he says (Figure 2).
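Conventional scaleup knowledge of the kind Haidari mentions often takes the form of a rule of thumb such as constant power per unit volume. The Python sketch below applies that rule, assuming geometric similarity, fully turbulent flow and a constant power number; the tank sizes are hypothetical. It says nothing about startup forces, new applications or changes in reaction rate, which are exactly the operational questions where a detailed simulation of the specific tank adds insight.

```python
def scaled_impeller_speed(n1_rpm: float, d1_m: float, d2_m: float) -> float:
    """Impeller speed for a geometrically similar tank at equal power per volume.

    Turbulent regime: P ~ N**3 * D**5 and V ~ D**3, so P/V ~ N**3 * D**2.
    Holding P/V constant gives N2 = N1 * (D1 / D2) ** (2/3).
    """
    return n1_rpm * (d1_m / d2_m) ** (2.0 / 3.0)


# Hypothetical pilot tank: 0.1-m impeller at 300 rpm, scaled to a 0.8-m impeller
n2 = scaled_impeller_speed(300.0, 0.1, 0.8)
print(f"Plant impeller speed: {n2:.1f} rpm")  # about 75 rpm
```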


In other words, given a specific set of questions, simulation provides insight. “The value you get out of simulation and insight is that it efficiently and effectively provides data, because it can quickly provide answers that previously had to be obtained by running lengthy experiments. Simulations allow engineers to narrow down their search, find the desired results and more easily optimize the system for the best results,” says Haidari.

Drilling down further, simulation tools also exist for performance monitoring of specific sets of equipment, such as heat exchangers, providing very rigorous, accurate models, says David Gibbons, director, engineering software development, with HTRI (Navasota, Tex.; www.htri.net). “Oftentimes after a plant is built and operating, processors find they have a problem with a heat exchanger that is not performing as expected and it reduces throughput. Alternatively, users may need to change process conditions and be sure their heat exchanger is capable of handling the new conditions. In these cases, a specialized, rigorous model is more helpful than the native, simple heat-exchanger models that are provided with most simulation tools,” says Gibbons. “By using specialized software for heat exchangers, you can call up the detailed rigorous models that would be relevant to the equipment you are troubleshooting, which gives you the benefit of accuracy when trying to determine how the heat exchanger performs as conditions change.”

He adds that there is a continuing need to understand fouling and to decide when to clean heat exchangers, so monitoring heat-exchanger performance is an important area. More rigorous models, based on historic data of heat-exchanger performance, as well as data from the plant, can help users determine how and where fouling is occurring. It is only by looking at the network of heat exchangers as a whole that users can optimize which heat exchangers to clean. “There’s a huge amount of money lost through fouling and the better they are able to deal with that, the more money they can save from lost revenue and downtime. That is more profit they can make at the end of the day.”
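The basic arithmetic behind fouling monitoring is simple, even though the rigorous models Gibbons describes are not. In the Python sketch below, the measured duty and terminal temperatures give an actual overall coefficient, U_actual = Q / (A × LMTD), and the fouling resistance is its difference from the clean value, R_f = 1/U_actual − 1/U_clean. The exchanger names, readings and clean coefficients are hypothetical, the LMTD assumes pure counterflow, and ranking by fouling resistance alone is a simplification: a real cleaning schedule would also weigh lost duty, hydraulics and cleaning cost across the whole network.

```python
import math
from dataclasses import dataclass


@dataclass
class ExchangerReading:
    """One set of plant measurements for a counterflow heat exchanger (SI units)."""
    name: str
    duty_w: float     # measured heat duty Q, W
    area_m2: float    # heat-transfer area A, m2
    u_clean: float    # clean (design) overall coefficient, W/(m2*K)
    t_hot_in: float   # temperatures in degC
    t_hot_out: float
    t_cold_in: float
    t_cold_out: float


def lmtd_counterflow(r: ExchangerReading) -> float:
    """Log-mean temperature difference for pure counterflow (no F-correction)."""
    dt1 = r.t_hot_in - r.t_cold_out
    dt2 = r.t_hot_out - r.t_cold_in
    if math.isclose(dt1, dt2):
        return dt1  # limit of the LMTD expression when both end differences are equal
    return (dt1 - dt2) / math.log(dt1 / dt2)


def fouling_resistance(r: ExchangerReading) -> float:
    """R_f = 1/U_actual - 1/U_clean, with U_actual = Q / (A * LMTD), in m2*K/W."""
    u_actual = r.duty_w / (r.area_m2 * lmtd_counterflow(r))
    return 1.0 / u_actual - 1.0 / r.u_clean


def cleaning_priority(readings: list) -> list:
    """Order the exchanger network from most to least fouled."""
    return sorted(readings, key=fouling_resistance, reverse=True)


# Hypothetical readings for a three-exchanger preheat train
network = [
    ExchangerReading("E-101", 1.0e6, 80.0, 250.0, 150.0, 100.0, 40.0, 90.0),
    ExchangerReading("E-102", 2.0e6, 150.0, 300.0, 200.0, 120.0, 60.0, 130.0),
    ExchangerReading("E-103", 0.5e6, 40.0, 260.0, 120.0, 80.0, 30.0, 70.0),
]
for ex in cleaning_priority(network):
    print(f"{ex.name}: R_f = {fouling_resistance(ex):.5f} m2*K/W")
```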

Better control

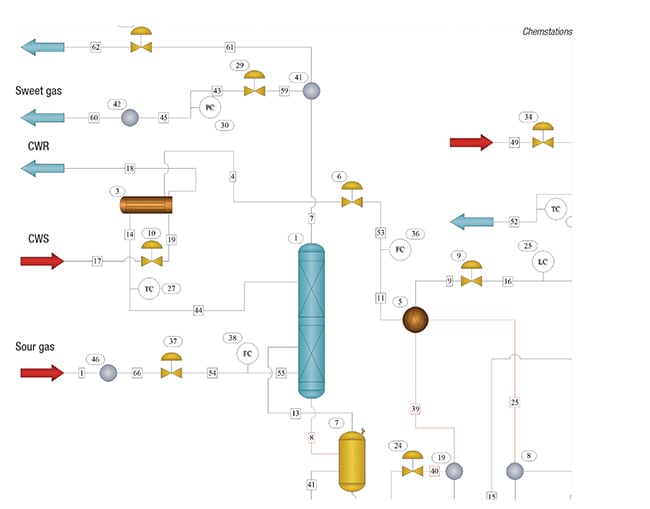

Another trend in simulation, says Steve Brown, president of Chemstations (Houston; www.chemstations.com), is the demand for a simulator arrangement where there is a “virtual plant” running alongside the actual plant, calculating optimal conditions, allowing users to compare optimal versus actual and displaying it to the operator who may not have experience with simulation tools. “In these cases, the operator doesn’t need to understand thermodynamics or the calculations or functions that are core to simulation, but he needs to understand what changing values in the actual production facility mean,” says Brown, whose company is currently working with partners to deliver solutions that will work in this manner. “The partner builds a very complex model of the virtual plant that operates exactly like the facility does, then embeds that technology inside their deliverable, which may include an interface like a SCADA [supervisory control and data acquisition] or Excel that is accessible to teams that don’t need to know anything about simulation to use it.”

He says this provides a true production environment, which can be employed to build a “decision thinking environment” that not only measures what is happening in the actual plant, but also what the virtual simulation engine is calculating, and deliver a dashboard to the management of the company so they can make decisions about how to best operate. “I would call it advanced process control using a virtual plant that is based on first-principle simulation.”
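The comparison at the heart of such a dashboard can be sketched in a few lines of Python. The tag names, values and 2% tolerance are hypothetical, and the "optimal" values are assumed to come from the virtual plant's first-principle calculation; the sketch only shows how actual and calculated values might be lined up and ranked for display.

```python
from dataclasses import dataclass


@dataclass
class Deviation:
    tag: str
    actual: float    # value read from the plant
    optimal: float   # value calculated by the virtual plant

    @property
    def percent(self) -> float:
        return 100.0 * (self.actual - self.optimal) / abs(self.optimal)


def flag_deviations(actual: dict, optimal: dict, tolerance_pct: float = 2.0) -> list:
    """Tags whose plant value differs from the virtual-plant optimum by more than tolerance_pct."""
    flagged = []
    for tag, target in optimal.items():
        if tag not in actual or target == 0:
            continue  # no plant reading this cycle, or percent deviation undefined
        dev = Deviation(tag, actual[tag], target)
        if abs(dev.percent) > tolerance_pct:
            flagged.append(dev)
    # Largest gaps first, so the dashboard leads with what matters most
    return sorted(flagged, key=lambda d: abs(d.percent), reverse=True)


# Hypothetical tag values for one polling cycle
plant = {"FI-101": 96.0, "TI-205": 352.0, "PI-310": 14.1}
virtual_plant = {"FI-101": 100.0, "TI-205": 350.0, "PI-310": 12.0}
for d in flag_deviations(plant, virtual_plant):
    print(f"{d.tag}: actual {d.actual}, optimum {d.optimal} ({d.percent:+.1f}%)")
```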

While this has been attempted before, Brown says calculation speeds have caught up, allowing simulators to calculate fast enough to keep up with the distributed control system (DCS). “The DCS is constantly polling all of the data sources and if the simulator can’t keep up, there’s a hole in the system and lagging, so users can’t make decisions based on that. But with increases in speed, our simulation can deliver results as fast as needed to make realtime decisions and do advanced process control,” he says.

Every plant, he says, is subject to variability in terms of raw material quality, ambient temperatures, humidity and so on, and typical control systems are one way to address these variables. “However, advanced process control that has access to rigorous first-principle simulation allows management to find alternative operating conditions that are global optimum conditions, opening the door to massive savings in energy and other efficiencies over a wide range of variable operating conditions,” explains Brown.

This type of simulation can also be used for complex functions such as calculating the composition of a stream, says Brown. “Typically, measuring the composition of the stream requires an online analyzer, which is very difficult and expensive to maintain, but the simulator is capable of calculating the composition very easily given all the inputs from the plant itself, so it serves as a ‘soft sensor’ because it is capable of very fast, rigorous and accurate calculations. But there is no need for the simulation environment to be visible” (Figure 3).
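A full soft sensor of the kind Brown describes relies on rigorous thermodynamics, but the principle can be shown with the simplest first-principle relation, a steady-state component mass balance. The Python sketch below infers the composition downstream of a mixing point from measured flows and known feed compositions; it assumes steady state, no reaction and reliable flowmeters, and the streams are hypothetical.

```python
def mixed_composition(streams: list) -> dict:
    """Outlet mass fractions at a mixing point from a steady-state component balance.

    streams: list of (mass_flow_kg_h, {component: mass_fraction}) pairs.
    Outlet fraction of component c = sum(m_i * x_ic) / sum(m_i).
    """
    total_flow = sum(flow for flow, _ in streams)
    if total_flow <= 0:
        raise ValueError("total flow must be positive")
    components = sorted({c for _, fractions in streams for c in fractions})
    return {
        c: sum(flow * fractions.get(c, 0.0) for flow, fractions in streams) / total_flow
        for c in components
    }


# Hypothetical measured flows (kg/h) and known feed compositions (mass fractions)
feeds = [
    (1000.0, {"water": 0.9, "ethanol": 0.1}),
    (3000.0, {"water": 0.5, "ethanol": 0.5}),
]
print(mixed_composition(feeds))  # roughly 0.4 ethanol, 0.6 water
```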

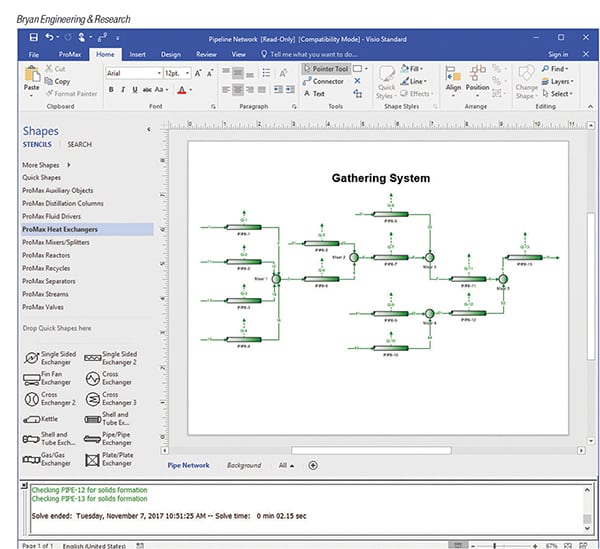

Figure 3. First-principle simulations can interact with APC tools via OPC or even Microsoft Excel to operate as virtual plants and soft sensors

BRE’s Addington agrees that there is a growing demand for automated simulation. “We see more people in engineering wanting to sit down and solve engineering problems, but they don’t want to spend time building models or getting them up to date. Automating the simulation allows them to do this. They might have the simulation set up to grab operating data straight from the historian, allowing the engineer to sit down and immediately start looking for bottlenecks or areas of optimization. They may also have the simulator set up so that it runs automatically to fill in the blanks of their instrumentation,” he says.

For example, it might be used to continually monitor a pipeline system for liquid dropout using measured compositions and flows. “We can calculate the phase envelope for the mixtures and feed that back into the system so operators can see how close they are to the cricondentherm [the maximum temperature on the dewpoint curve, above which no liquid can form]. It is something they want to know in real time, but it’s not something they can go out and measure. However, the simulator can calculate this based on the data,” he explains (Figure 4).
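Once a simulator has calculated the phase envelope, reporting how close the pipeline is to the cricondentherm is straightforward. The envelope calculation itself requires an equation-of-state model and is not reproduced here; in this Python sketch the dewpoint-curve points are hypothetical placeholders standing in for simulator output, and the margin is simply the operating temperature minus the highest temperature on the envelope.

```python
def cricondentherm(envelope: list) -> tuple:
    """Highest-temperature point (pressure, temperature) on a phase envelope.

    With a discrete set of points, this is only as accurate as the point spacing.
    """
    return max(envelope, key=lambda point: point[1])


def dropout_margin(operating_temp_c: float, envelope: list) -> float:
    """Degrees above the cricondentherm. A negative value means liquid can form
    at some pressure, so the operating pressure must also be checked."""
    _, t_cct = cricondentherm(envelope)
    return operating_temp_c - t_cct


# Hypothetical dewpoint-curve points (bar, degC) as a simulator might return them
dew_curve = [(10.0, -20.0), (30.0, 5.0), (50.0, 15.0), (60.0, 17.0), (70.0, 16.0), (80.0, 10.0)]
print(cricondentherm(dew_curve))        # (60.0, 17.0)
print(dropout_margin(25.0, dew_curve))  # 8.0
```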

Figure 4. Automated simulations can be used to continually monitor a pipeline system for liquid dropout using measured compositions and flows. The phase envelope for the mixtures can be calculated and fed back into the system so operators can see how close they are to the cricondentherm. Operators want these data in realtime but cannot measure them directly; however, the simulator can calculate them from plant data

Results from these types of simulations can be used as accurate information for decision making or, in other cases, for optimization. “When there are dozens of variables they can adjust in an effort to improve performance, it can be difficult and time consuming to determine what move to make. The simulator can be set up to run automatically, pull in historical information and calculate the optimum,” Addington says. “This gives operators a direction to look at to determine if it is worth making changes to the process and if those changes will increase the bottom line. It gets information in their hands that they otherwise might not have, allowing them to make better engineering decisions.”

Taking simulation into the IIoT

A growing trend, says Schneider Electric’s Wiley, is to use simulation for applications such as augmented reality and artificial intelligence (AI). “These are all extensions of simulation, automation, advanced process control and optimization that are leading us closer to the Industrial Internet of Things (IIoT), or Industry 4.0,” she says. “Because many process facilities are already so automated, have the sensors and instrumentation and have already captured the models, they are in a position to take those data and that model and apply things like augmented reality for maintenance, training and predictive analytics.”

While the applications sound futuristic, they are already beginning to creep into industry via virtual-reality and augmented-reality-based operations used for training and maintenance. “We see it happening now where a digital work order is created and maintenance techs go into the field with a tablet, take that device, place it in front of the unit and the simulation superimposes the data, schematics and instructions right on their view.”

In what is called immersive simulation, mobile devices coupled with simulation augment the actual view. “It’s all in a real environment and it gives the end user that extra help, which improves productivity and lowers maintenance costs,” explains Wiley.

She adds that by digitizing the operation, it is possible to use this augmented reality and AI for predictive analytics, as well. “The idea is to predict the failure before it occurs. The simulator can apply the rules and the data and understand when a piece of equipment will alarm so it can be addressed before that occurs. With the technology that is already available — we have all the pieces and the ability to plug it all together — augmented and virtual reality for these types of IIoT applications will grow quickly.”
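The rules-and-data approach Wiley describes can take many forms. One of the simplest stand-ins is to fit a trend to a monitored variable and extrapolate it to its alarm limit, as in the Python sketch below, which uses an ordinary least-squares line. The vibration readings and alarm limit are hypothetical, and practical predictive analytics generally combine far richer models with the plant's simulation.

```python
def fit_line(times: list, values: list) -> tuple:
    """Ordinary least-squares slope and intercept of values versus times."""
    n = len(times)
    if n < 2 or n != len(values):
        raise ValueError("need at least two paired readings")
    mean_t = sum(times) / n
    mean_v = sum(values) / n
    s_tt = sum((t - mean_t) ** 2 for t in times)
    if s_tt == 0:
        raise ValueError("readings must span more than one time")
    s_tv = sum((t - mean_t) * (v - mean_v) for t, v in zip(times, values))
    slope = s_tv / s_tt
    return slope, mean_v - slope * mean_t


def hours_until_alarm(times_h: list, values: list, high_alarm: float):
    """Extrapolate the fitted trend to the high-alarm limit.

    Returns hours after the latest reading, 0.0 if the trend line is already
    past the limit, or None if the signal is not rising toward it.
    """
    slope, intercept = fit_line(times_h, values)
    if slope <= 0:
        return None
    t_alarm = (high_alarm - intercept) / slope
    return max(0.0, t_alarm - times_h[-1])


# Hypothetical daily bearing-vibration readings (mm/s) and alarm limit
hours = [0.0, 24.0, 48.0, 72.0, 96.0]
vibration = [2.0, 2.5, 3.0, 3.5, 4.0]
print(hours_until_alarm(hours, vibration, high_alarm=7.0))  # about 144 h
```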